Containers website assignment

At Mozilla we have been working on a feature called containers, which gives users the ability to separate different parts of their online lives to limit how much they can be tracked.

Since we launched containers in Nightly, and now in Test Pilot, the most requested feature has been the ability to assign a website to a container. Once a user assigns a website to a container, the browser loads that website in that container whenever it is requested.

To get the features mentioned in this article, download Containers in Test Pilot.

How it works

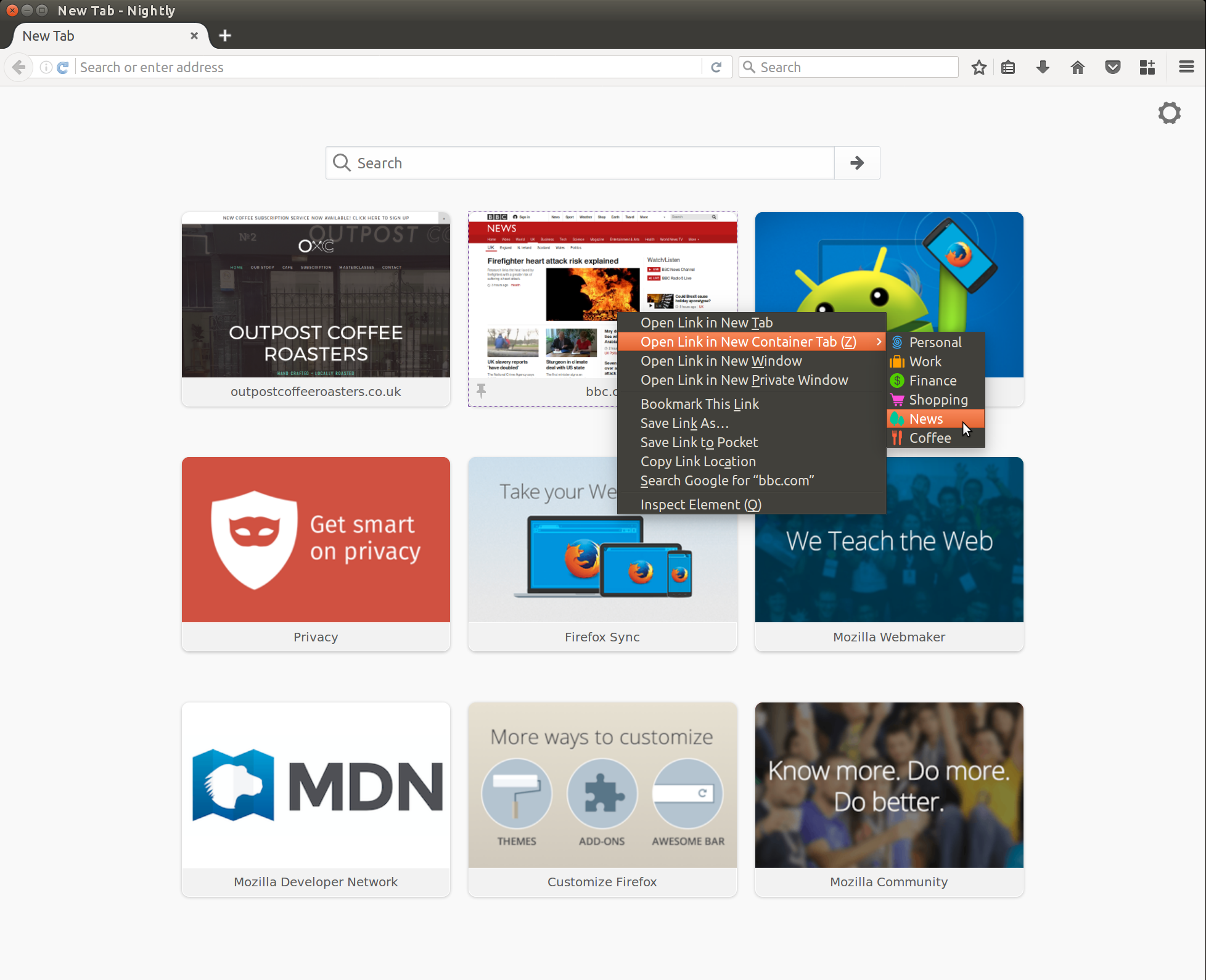

Starting today, you can assign websites to your containers using the following flow:

Open bbc.com in a News container:

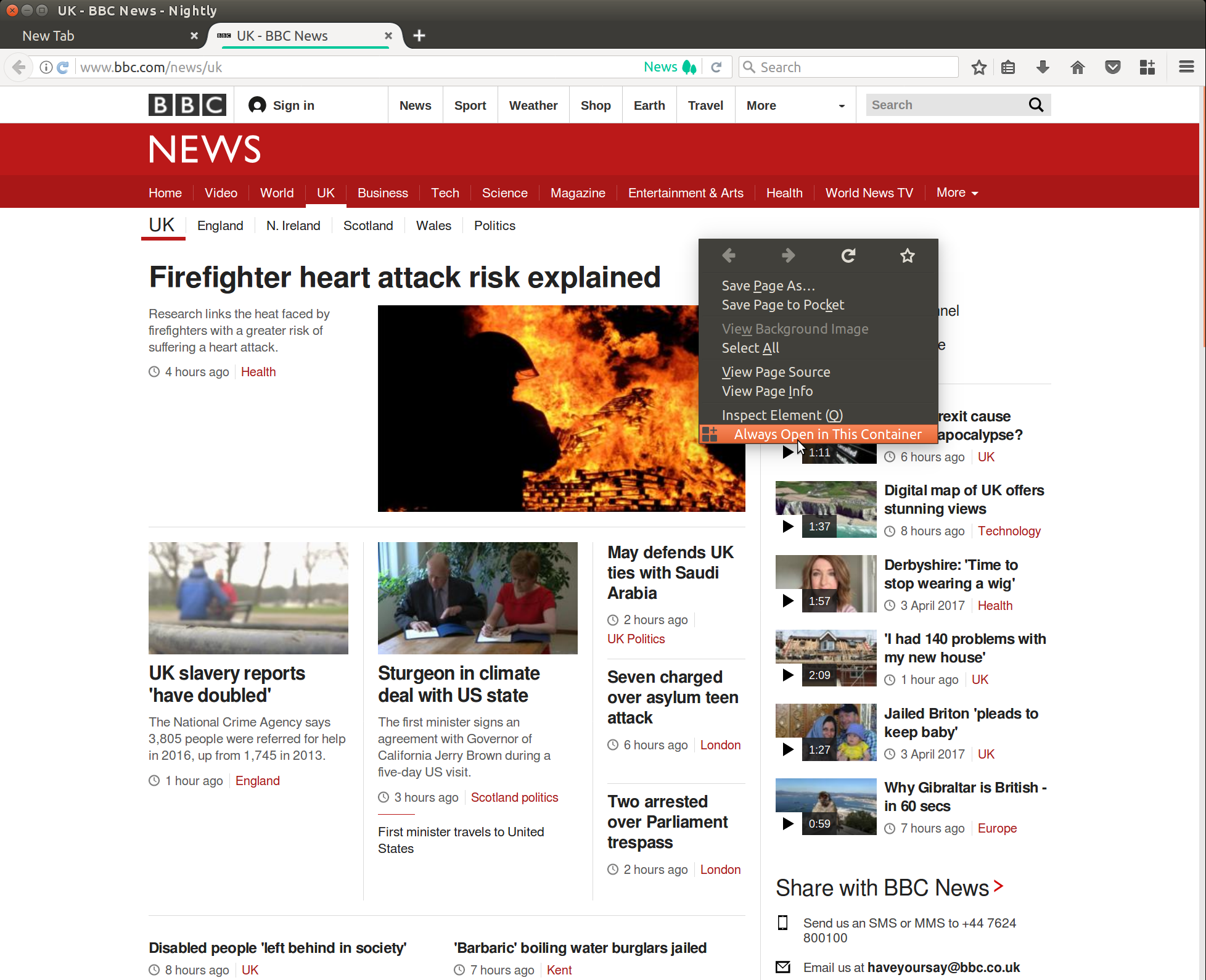

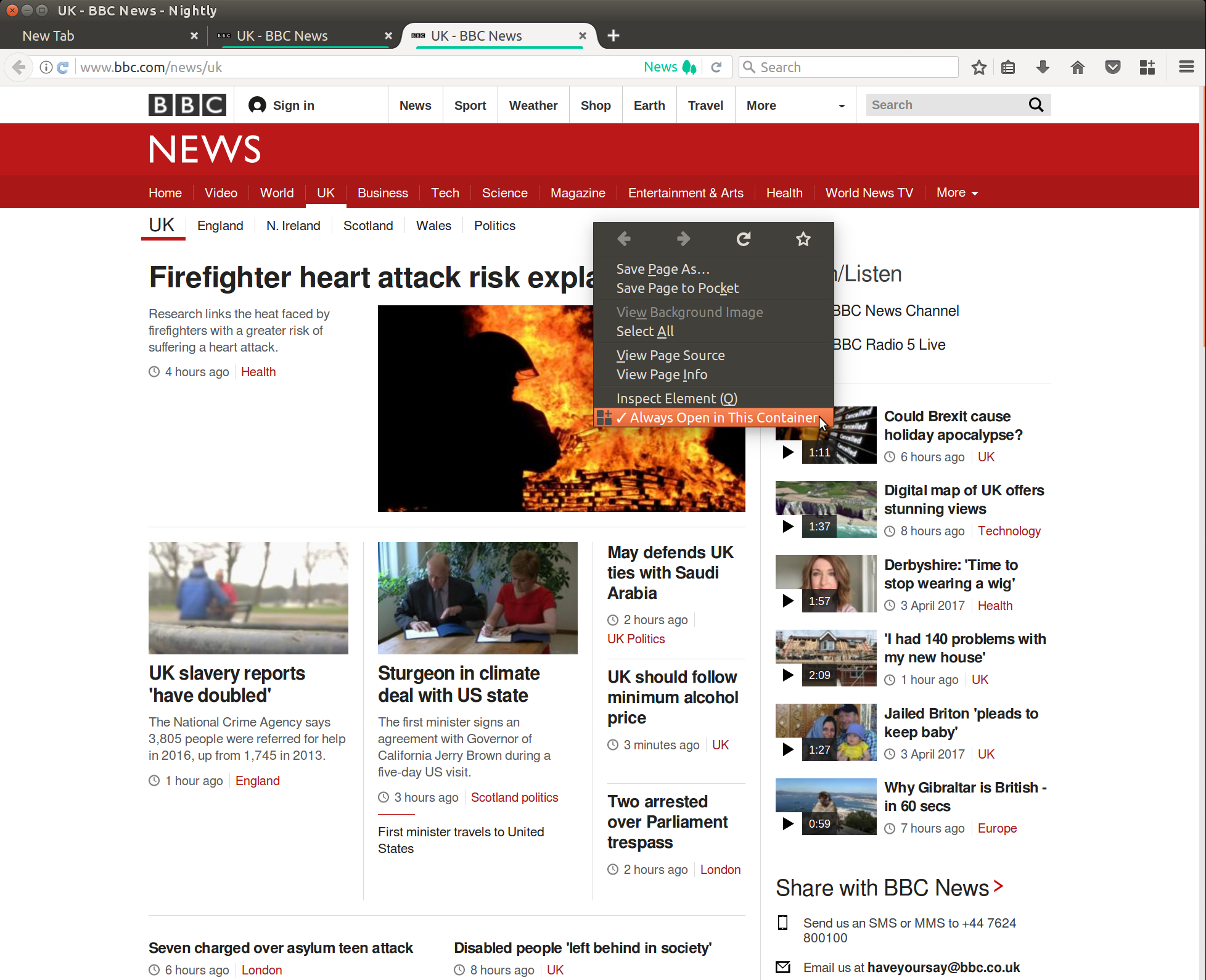

Assign the site to the container by picking "Always Open in This Container":

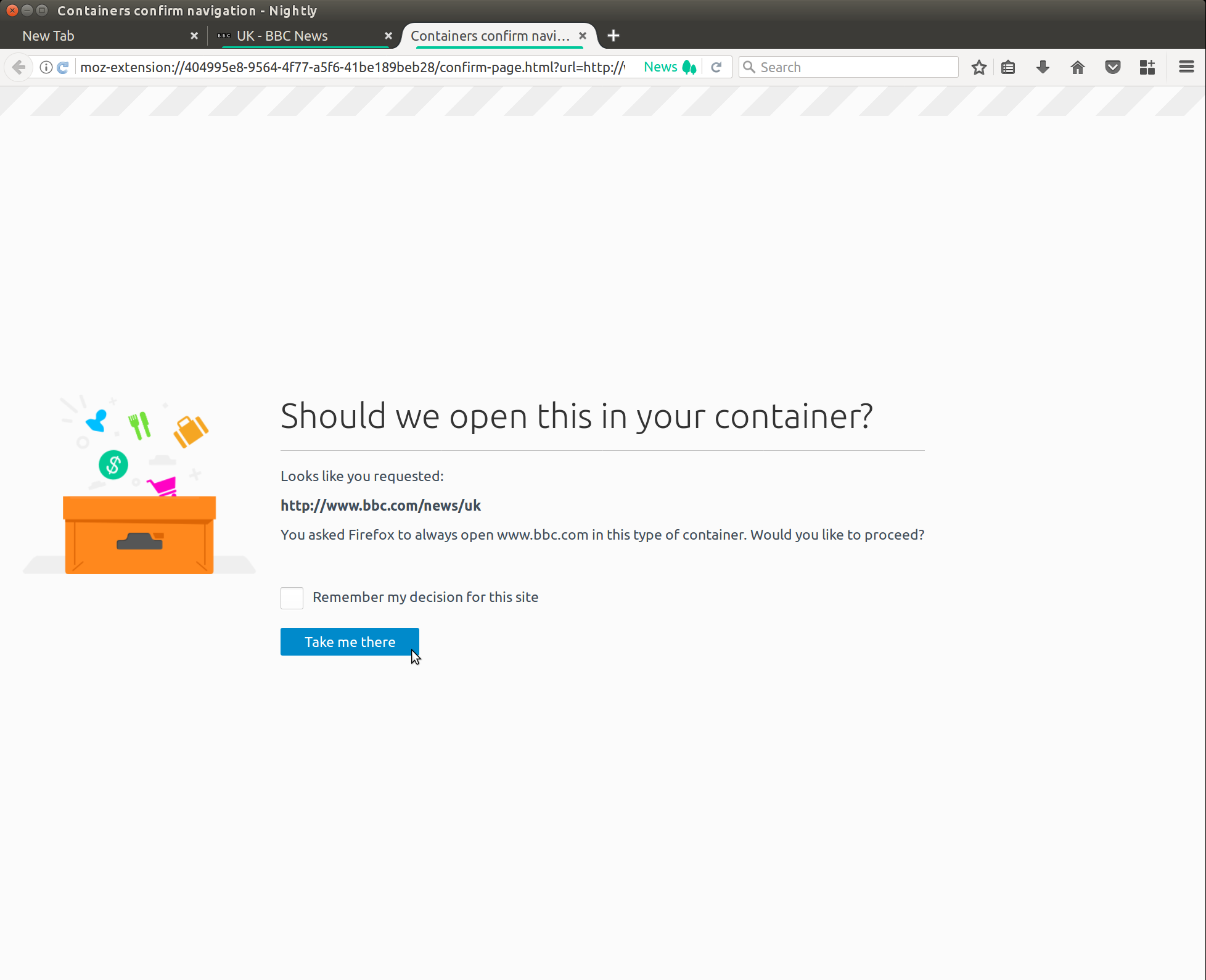

When you next click a link to bbc.com, you will see a prompt asking you to confirm opening it in the container you chose.

To remove the assignment, use the same context menu.

This example shows how a user can stop their browsing history being shared across advertisers, news and shopping sites whilst adding only minimal effort to their browsing flow.

Hard decisions

For a long time we put off implementing this feature because of its user interface complexity, and we will likely still have to tweak it after launch. The feature itself wasn't very complex, at only ~300 lines of code.

I was initially hesitant to implement this feature due to the following three issues:

- The ability to CSRF yourself.

- The inability to use more than one container for a website.

- The inability to grasp why containers are isolated.

1. The ability to CSRF yourself

Websites often have paths or pages that provide capabilities other pages can call, such as paying someone or resetting a password. A Cross-Site Request Forgery (CSRF) is an attack in which a user's browser visits one of these paths without the user intending to. CSRF exploits usually require the user to already be authenticated to a website and then to be sent, unknowingly, to one of these capability URLs, which performs a privileged action on the authenticated user's behalf.

For example:

- User visits example.bank.

- User logs in.

- User then visits evil.example.com.

- evil.example.com redirects the user to example.bank/makepayment?username=evil&amount=1000 in a hidden frame that they can't see.

- Assuming example.bank doesn't protect against CSRF, the money is taken from the user's account.

There are many ways a website can protect users from these attacks; however, in practice CSRF vulnerabilities still exist on the web today.
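One widely used defence is the synchronizer token pattern: the site ties a secret, unguessable token to the user's session and refuses any state-changing request that doesn't echo it back. Below is a minimal Python sketch, assuming an in-memory session store; `SESSIONS`, `issue_token` and `make_payment` are hypothetical names, not example.bank's real API.

```python
import hmac
import secrets

# Hypothetical in-memory session store: session id -> anti-CSRF token.
SESSIONS: dict[str, str] = {}


def issue_token(session_id: str) -> str:
    """Create a random token that the bank embeds in its own payment form."""
    token = secrets.token_urlsafe(32)
    SESSIONS[session_id] = token
    return token


def make_payment(session_id: str, params: dict[str, str]) -> bool:
    """Run the privileged action only if the request carries the session's token.

    A cross-site page can make the browser attach the session cookie, but it
    cannot read the token out of the bank's pages, so a forged request fails.
    """
    expected = SESSIONS.get(session_id)
    supplied = params.get("csrf_token", "")
    # Constant-time comparison avoids leaking the token through timing.
    if expected is None or not hmac.compare_digest(expected.encode(), supplied.encode()):
        return False
    # A real bank would transfer params["amount"] to params["username"] here.
    return True


sid = "session-123"
token = issue_token(sid)
forged = {"username": "evil", "amount": "1000"}
print(make_payment(sid, forged))                           # False: no token
print(make_payment(sid, {**forged, "csrf_token": token}))  # True
```

The check has to be applied to every capability URL; a single unprotected endpoint is enough for the attack described above.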

Containers actually fix this issue, so long as you log in to services within a dedicated container. Let's take another look at that example:

- User opens a Finance container and logs into example.bank.

- User visits bad.com in the default "No container" new tab.

- bad.com redirects the user to example.bank/makepayment?username=evil&amount=1000 in a hidden frame that they can't see.

- example.bank in "No container" isn't logged in and doesn't know about the user, so it refuses to pay the evil user.

However, if we had assigned the bank to always open in the Finance container, the forged request would be loaded in Finance, where the user is logged in, and we would not be protected.
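The difference between the two scenarios comes down to which cookie jar the forged request reaches. Here is a toy Python model, assuming one cookie jar per container and an assignment table keyed by hostname; `jars`, `assignments`, `log_in` and `bank_pays` are illustrative names, not Firefox internals. Like the scenario above, it treats the forged request as a load that the assignment applies to.

```python
from urllib.parse import urlsplit

# Toy model (assumption): each container has its own cookie jar,
# represented here as a dict keyed by (container, host).
jars: dict[tuple[str, str], dict[str, str]] = {}

# Hypothetical assignment table: host -> container it always opens in.
assignments: dict[str, str] = {}


def log_in(container: str, host: str) -> None:
    """Store a session cookie for host in the given container's jar only."""
    jars[(container, host)] = {"session": "alice"}


def container_for(url: str, current: str) -> str:
    """An assigned host is moved to its container; anything else stays put."""
    host = urlsplit(url).hostname or ""
    return assignments.get(host, current)


def bank_pays(url: str, current: str) -> bool:
    """The bank honours the request only if it arrives with a session cookie."""
    host = urlsplit(url).hostname or ""
    container = container_for(url, current)
    return "session" in jars.get((container, host), {})


attack = "https://example.bank/makepayment?username=evil&amount=1000"
log_in("Finance", "example.bank")

# bad.com triggers the request from "No container": that jar is empty.
print(bank_pays(attack, "No container"))  # False

# After assigning example.bank to Finance, the same request lands in Finance.
assignments["example.bank"] = "Finance"
print(bank_pays(attack, "No container"))  # True
```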

Furthermore, single-use capability URLs can also leak through the Referer header.
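A Referer header can carry the whole URL of the page, query string included. The Python sketch below models two Referrer-Policy values from the W3C Referrer Policy specification in simplified form (it ignores default-port normalisation and the other policies). `no-referrer-when-downgrade` was the long-standing browser default, so a token in a capability URL's query string could be sent to third-party hosts. The capability URL and tracker host are made up for illustration.

```python
from typing import Optional
from urllib.parse import urlsplit, urlunsplit


def _host_port(url: str) -> str:
    # netloc without any "user:password@" prefix
    return urlsplit(url).netloc.rpartition("@")[2]


def full_referrer(url: str) -> str:
    """Referer value with fragment and credentials removed; the query is kept."""
    p = urlsplit(url)
    return urlunsplit((p.scheme, _host_port(url), p.path, p.query, ""))


def origin_referrer(url: str) -> str:
    """Origin-only Referer value, e.g. "https://example.bank/"."""
    return f"{urlsplit(url).scheme}://{_host_port(url)}/"


def referrer(from_url: str, to_url: str, policy: str) -> Optional[str]:
    """Simplified: origins are compared as written, without port normalisation."""
    downgrade = urlsplit(from_url).scheme == "https" and urlsplit(to_url).scheme == "http"
    if downgrade:
        return None  # both policies modelled here send nothing on HTTPS -> HTTP
    if policy == "no-referrer-when-downgrade":
        return full_referrer(from_url)
    if policy == "strict-origin-when-cross-origin":
        same_origin = origin_referrer(from_url) == origin_referrer(to_url)
        return full_referrer(from_url) if same_origin else origin_referrer(from_url)
    raise ValueError(f"policy not modelled here: {policy}")


capability = "https://example.bank/reset?token=s3cret#top"
tracker = "https://tracker.example/pixel"
print(referrer(capability, tracker, "no-referrer-when-downgrade"))       # https://example.bank/reset?token=s3cret
print(referrer(capability, tracker, "strict-origin-when-cross-origin"))  # https://example.bank/
```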

2. The inability to use more than one container for a website

Users won't get the advantage of having multiple mail accounts open in different containers (for example, Work and Personal mail), because a website can only be assigned to one container.

3. The inability to grasp why containers are isolated

My other concern with container assignment was that users wouldn't see the immediate advantage of using containers if they always got teleported into a particular container.

Users might end up with their search engine and mail provider lumped into the same container, which would let that company keep tracking them across all of these properties. One of the design goals of containers was to limit how much users are tracked whilst browsing the internet, and to help users shatter their filter bubble.

If a website can force a user into the container assigned to another site, it can potentially continue to track the user.

Let's look at an example of a staff member leaking their information across the web:

- User visits https://work.example.com in a Work container and assigns it to always open in Work.

- User opens a new tab and visits https://example.clinic.

- User searches on example.clinic for something they don't want their work to know about.

- horrible.example.marketing sends a cookie to the browser with a unique identifier "hey-we-heard-you-had-medical-issues".

- https://example.clinic forces the user to load https://work.example.com/?tracking-id="hey-we-heard-you-had-medical-issues".

- The browser reopens work.example.com from "No container" in the Work container, keeping the same path and query string.

- The horrible.example.marketing script is also loaded on the work.example.com domain, and it sets a cookie based upon the tracking-id GET parameter.

- horrible.example.marketing is now able to link the two sites together across containers.

- work.example.com decides to not promote staff that may push up insurance premiums.
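The crucial step in this example is that the assignment redirect preserves the query string, so the identifier crosses the container boundary with it. Below is a toy Python model of the steps above; `ASSIGNED`, `open_url`, `tracker_script` and the cookie naming are hypothetical, and the tracker's behaviour is illustrative rather than taken from any real script.

```python
from urllib.parse import parse_qs, urlsplit

# Hypothetical assignment: the user chose "Always open in Work" for this origin.
ASSIGNED = {"https://work.example.com": "Work"}

# Toy cookie jar for the tracker's own domain: container -> tracker cookie id.
tracker_cookies: dict[str, str] = {}
# The tracker's server-side join table: its cookie id -> identifiers seen with it.
profiles: dict[str, set[str]] = {}


def open_url(url: str, current: str) -> tuple[str, str]:
    """Mimic assignment: reopen in the assigned container, keeping path and query."""
    p = urlsplit(url)
    return ASSIGNED.get(f"{p.scheme}://{p.netloc}", current), url


def tracker_script(container: str, page_url: str) -> None:
    """What a third-party script embedded on the page could do (illustrative)."""
    own_id = tracker_cookies.setdefault(container, f"tracker-id-in-{container}")
    for value in parse_qs(urlsplit(page_url).query).get("tracking-id", []):
        profiles.setdefault(own_id, set()).add(value)


# example.clinic (in "No container") pushes the user to the work site.
leak = "https://work.example.com/?tracking-id=hey-we-heard-you-had-medical-issues"
container, url = open_url(leak, "No container")
# The page, and the tracker embedded on it, now run inside Work.
tracker_script(container, url)
print(container)  # Work
print(profiles)   # {'tracker-id-in-Work': {'hey-we-heard-you-had-medical-issues'}}
```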

Whenever you assign an origin to a container, you risk leaking your browsing history as this unsuspecting person did. This might seem extreme, but there is evidence to suggest that medical information often leaks from one website to another.

How we solved these issues

- We decided to warn users with a prompt, which can be dismissed with a checkbox.

- I also prevented form submissions from crossing containers unless they use the GET method.

  - GET forms shouldn't have capabilities like withdrawing money.

  - This will cause some breakage, but it should prevent the worst of these attacks.

- Issue two is currently left unresolved, because we wanted users to have the ability to hide the warning.

- After checking many URLs, we decided that websites still split their services by subdomain often enough that we could assign containers based upon the Same Origin Policy.

  - This means mail.example.com and www.example.com are treated differently.

  - Assigning sites based upon the Same Origin Policy also gave us isolation against HTTP-based attacks, unless users had explicitly assigned the insecure version too. However, we had to decide whether users would want this behaviour...
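A sketch of how the prompt and the GET-only form rule listed above could combine into a single decision for a navigation that targets an assigned site. `Action`, `Navigation` and `decide` are hypothetical names, not the extension's code, and the article doesn't say whether a blocked POST is dropped or left in place; this sketch assumes it stays in the container it started in.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    STAY = "load in the current container"
    PROMPT = "ask the user before switching container"
    SWITCH = "reopen in the assigned container"


@dataclass
class Navigation:
    current: str             # container the request starts in
    assigned: Optional[str]  # container the target site is assigned to, if any
    method: str = "GET"      # links and GET forms use GET; POST forms use POST


def decide(nav: Navigation, warning_dismissed: bool) -> Action:
    """Hypothetical policy combining the two CSRF mitigations."""
    if nav.assigned is None or nav.assigned == nav.current:
        return Action.STAY
    # Only GET requests may cross containers, so a POST never reaches the
    # logged-in container (assumption: it stays where it started).
    if nav.method.upper() != "GET":
        return Action.STAY
    # The user can tick a checkbox to stop seeing the confirmation prompt.
    return Action.SWITCH if warning_dismissed else Action.PROMPT


print(decide(Navigation("No container", "Finance", "POST"), True))  # Action.STAY
print(decide(Navigation("No container", "Finance"), False))         # Action.PROMPT
```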

Deciding on HTTP website assignment

We had to strike a hard balance on whether to separate HTTP and HTTPS assignment of a website.

Advantages

- This would prevent http://example.bank/makepayment?username=evil&amount=1000 redirecting to https://example.bank/makepayment?username=evil&amount=1000.

- This would break some websites that don't redirect HTTP to HTTPS; however, we now show insecure password warnings on such sites, so users should be less tempted to log in to the HTTP version of a website.

- It would further discourage HTTP traffic.

Disadvantages

- Users might click a link to an HTTP version of the website, expecting their history not to leak into the container they are currently in.

- Users would be unsure why they have to assign both HTTP and HTTPS, and this wouldn't be easy to explain in the UI.

- Displaying the protocol for both https://example.com and http://example.com would add further UI barriers.
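The trade-off above comes down to what the assignment table is keyed on. Here is a Python sketch of the two options, with hypothetical function names: `origin_key` follows the Same Origin Policy's (scheme, host, port) tuple, which keeps HTTP and HTTPS apart, while `host_key` drops the scheme so both versions share one assignment. Both keep subdomains such as mail.example.com and www.example.com separate. Neither is claimed to be the extension's actual storage format.

```python
from typing import Optional
from urllib.parse import urlsplit

DEFAULT_PORTS = {"http": 80, "https": 443}


def origin_key(url: str) -> tuple[str, Optional[str], Optional[int]]:
    """Same Origin Policy key: (scheme, host, port), with default ports filled in."""
    p = urlsplit(url)
    return (p.scheme, p.hostname, p.port or DEFAULT_PORTS.get(p.scheme))


def host_key(url: str) -> Optional[str]:
    """Scheme-agnostic key: http:// and https:// share one assignment."""
    return urlsplit(url).hostname


print(origin_key("https://mail.example.com/") == origin_key("https://www.example.com/"))  # False
print(origin_key("http://example.com/") == origin_key("https://example.com/"))            # False
print(host_key("http://example.com/login") == host_key("https://example.com/"))           # True
```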

Decision

Despite the risk of HTTP over insecure networks, we decided not to separate HTTP and HTTPS assignment: it would break too many websites right now, and the usability disadvantages were pretty bad. The containers project as a whole has been a hard balance between usability and stricter security settings. There are already places where containers are imperfect at preventing tracking, such as:

- OCSP

- HSTS flags (see HSTS supercookies for the tracking technique).

- Security exceptions for invalid certificates.
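HSTS supercookies work because a browser remembers which hosts asked to be loaded over HTTPS only, and, as noted above, that memory isn't separated by container. Below is a toy Python model, assuming a single shared HSTS cache and a hypothetical tracker domain with one subdomain per bit: the tracker writes an ID by sending the HSTS header only from the subdomains whose bit is 1, and later reads it back, from any container, by checking which plain-HTTP subdomain requests get upgraded to HTTPS.

```python
# Assumption: one HSTS cache shared by all containers.
hsts_cache: set[str] = set()

TRACKER = "tracker.example"  # hypothetical tracker domain
BITS = 16                    # one subdomain per bit of the identifier


def write_id(user_id: int) -> None:
    """Pin HSTS on b<N>.tracker.example for every bit N set in user_id."""
    for bit in range(BITS):
        if (user_id >> bit) & 1:
            hsts_cache.add(f"b{bit}.{TRACKER}")


def upgraded_to_https(host: str) -> bool:
    """The observable effect: http:// requests to a pinned host become https://."""
    return host in hsts_cache


def read_id() -> int:
    """Rebuild the identifier from which subdomains were upgraded."""
    return sum(1 << bit for bit in range(BITS) if upgraded_to_https(f"b{bit}.{TRACKER}"))


write_id(41394)   # set while the user browses in one container...
print(read_id())  # ...and read back in another: 41394
```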

Containers aren't considered the most robust security and privacy measure we have within the browser; they're a compromise between usability and robust security. At-risk users should consider the patches Mozilla is working on as part of the Tor uplift project, namely "first party isolation", and the anti-fingerprinting techniques Tor Browser uses.

Outstanding questions

One advantage of running the Test Pilot experiment is that it lets us measure container usage and gauge how people are using it. We often struggle to run wild experiments like this within Firefox, because we can't gather as much information as we would like.

- Do users end up with too many of the same containers?

- Should links opened with ctrl/cmd + click stop opening in the same container?

- Now that websites can be assigned to a container, links opened in a new tab should probably open in "No container", to reduce the leakage of authenticated browsing back into third-party sites.

- Should we, instead of or as well as this, treat rel="noreferrer", rel="noopener" and rel="nofollow" links as an indicator for "No container"?

  - When a website indicates that linked pages don't have a strong relationship to each other, perhaps we should consider them to belong to different containers.
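To make these open questions concrete, here is a Python sketch of one possible policy that combines them: links opened in a new tab, or carrying `rel="noreferrer"`, `rel="noopener"` or `rel="nofollow"`, open in "No container", and everything else stays in the current container. This is hypothetical, not decided behaviour, and `container_for_link` is an illustrative name.

```python
NO_CONTAINER = "No container"
# rel tokens that signal a weak relationship between the linking and linked pages
WEAK_RELATIONSHIP = frozenset({"noreferrer", "noopener", "nofollow"})


def container_for_link(current: str, rel: str = "", new_tab: bool = False) -> str:
    """Hypothetical: choose the container for a link the user follows."""
    # In HTML, rel is a space-separated list of case-insensitive tokens.
    tokens = set(rel.lower().split())
    if new_tab or tokens & WEAK_RELATIONSHIP:
        return NO_CONTAINER
    return current


print(container_for_link("Work", rel="noopener external"))  # No container
print(container_for_link("Work", rel="author"))             # Work
```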

Feedback and bugs welcome

Feedback is always welcome at [email protected] where all the other developers involved in the experiment and the underlying platform changes will be notified.

Feel free to reach out on Twitter: @KingstonTime.

I want to highlight that, although this is a feature I worked on, many others have been involved with the experiment.

The team responsible for getting the Test Pilot experiment live:

The existing platform team responsible for getting containers and origin attributes into Nightly:

- Andrea Marchesini

- Bram Pitoyo

- Christoph Kerschbaumer

- Dave Huseby

- Ethan Tsengo

- Jonathan Hao

- Kamil Jozwiak

- Paul Theriault

- Steven Englehardt

- Tanvi Vyas

- Tim Huang

- Yoshi Huang

Past research:

- http://www.ieee-security.org/TC/W2SP/2013/papers/s1p2.pdf

- https://web.archive.org/web/20161107220901/https://blog.mozilla.org/ladamski/2010/07/contextual-identity/

Carolyn Jaeger, who created the stunning GIF in this article.

I'm sure many others have been missed too!